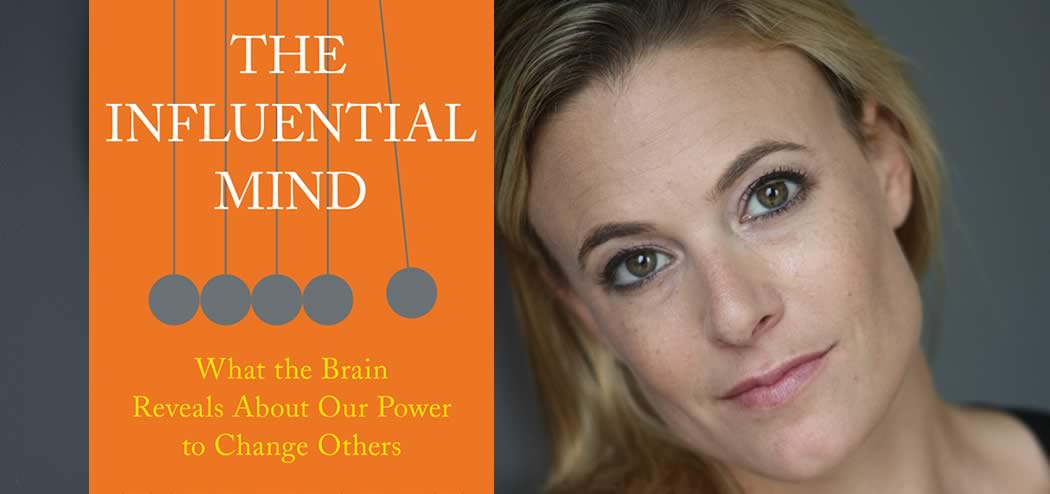

Today’s guest is an expert on decision-making, emotion, and influence. Dr. Tali Sharot has spent the past two decades studying human behavior and conducting dozens of experiments to figure out what causes people to change their decisions, update their beliefs, and rewrite their memories.

An Associate Professor of cognitive neuroscience at University College London—where she directs the Affective Brain Lab—as well as a visiting Professor at MIT, Tali has been featured in or written for the New York Times, Time magazine, The Washington Post, CNN, the BBC, the Today Show, and many others.

Her new book, The Influential Mind: What the Brain Reveals About Our Power to Change Others, explores the nature of influence and the critical role emotion plays in persuasion.

In this episode, Tali shares what research tells us about influencing the human mind. Listen in to learn the most effective ways to persuade people, motivate them to take action, and more.

If you enjoy the show, please drop by iTunes and leave a review while you are feeling the love! Reviews help others discover this podcast and I greatly appreciate them!

On Today’s Episode We’ll Learn:

- The most effective approach for motivating people to take action.

- How to use brain synchronization and brain coupling to influence others.

- What holds more power to change people’s minds than data and science.

- Why smart people are more subject to confirmation bias errors.

- The reason emotions are so important.

- How investment decisions are affected by confirmation bias.

- Where to start if we want to change people’s behaviors and/or beliefs.

Key Resources for Tali Sharot:

- Connect with Tali Sharot: Website

- Amazon: The Influential Mind: What the Brain Reveals About Our Power to Change Others

- Kindle: The Influential Mind: What the Brain Reveals About Our Power to Change Others

- Audible: The Influential Mind: What the Brain Reveals About Our Power to Change Others

- Amazon: The Optimism Bias: A Tour of the Irrationally Positive Brain

Share the Love:

If you like The Brainfluence Podcast…

- Never miss an episode by subscribing via iTunes, Stitcher or by RSS

- Help improve the show by leaving a Rating & Review in iTunes (Here’s How)

- Join the discussion for this episode in the comments section below

Full Episode Transcript:

Welcome to the Brainfluence Podcast with Roger Dooley, author, speaker and educator on neuromarketing and the psychology of persuasion. Every week, we talk with thought leaders that will help you improve your influence with factual evidence and concrete research. Introducing your host, Roger Dooley.

Roger: Welcome to the Brainfluence podcast. I’m Roger Dooley. Our guest this week is Tali Sharot. Tali is an associate professor of cognitive neuroscience at University College London where she runs the Affective Brain Lab and she’s currently a visiting professor at MIT. She’s an expert on decision making, emotion and influence. Her work is focused on figuring out what causes people to change their decisions, update their beliefs and rewrite their memories. Her previous book The Optimism Bias was a focus of a Time magazine cover story and her TED Talk on the topic will probably hit the two million view mark by the time you hear this. It was almost there when I checked today. Tali’s new book is The Influential Mind: What the Brain Reveals About Our Power to Change Others. Welcome to the show, Tali.

Tali Sharot: Thank you for having me.

Roger: Great. Well, Tali, you start off The Influential Mind with a political story, but I want to backtrack a little bit. It seems like the two big political surprises lately were the Brexit vote and then the election of Donald Trump not long after, and these seem to have been driven by pessimism about the future. Did optimism fail us or were those changes driven by a sense of optimism about what change could bring?

Tali Sharot: I actually think that it is optimism. It’s not maybe your standard optimism, what we’re used to but I do think even in these cases it was optimism. I think if you think about that Trump slogan it is Make America Great Again, right? So, I think in that case people who voted for Trump were voting for a better future, what they believed was a better future for them and their country. I think the same thing in Brexit. Again, it was people who were unhappy with the current situation and they were voting for change because, in their opinion, that change would bring a better life for them and the country, so I don’t think it’s pessimism. I think it is dissatisfaction with the current state of affairs and a belief that there is a possibility for a better way of life or a better political agenda, and of course, the thing with politics is everyone has their own opinion of what’s better and what will bring a better future.

Roger: Right. Well, I guess we can only hope that those decisions in fact justify the optimism, how things turned out. So, Tali, your book is in the sweet spot for our listeners. You start off by contrasting the communication style of Donald Trump and Ben Carson in one particular exchange. I’ll let you tell that story because I think it’ll really resonate with people.

Tali Sharot: Yeah, I think it was a really illustrative example of some of the things that I talk about in the book. During the presidential debate between Ben Carson and Donald Trump, so this was one of the first ones, they started a conversation about autism and whether there’s a link between autism and vaccines. So, on one part of the stage you had Ben Carson, who’s a pediatric neurosurgeon, and on the other you had Donald Trump, who’s not a doctor of any kind, doesn’t have medical expertise. So then, Ben Carson was saying there’s a lot of data and a lot of figures showing that there isn’t a link between autism and childhood vaccines, and Donald Trump on the other hand was saying, well, I know of this child who went to get a vaccine and he got very, very, very ill and then actually ended up having autism, and he was describing in real detail and vividness how there was this really large syringe that was used to vaccinate the kids. And then Ben Carson comes up and says, well, I think if Trump actually reads the studies and becomes familiar with all of the research and science out there, he will then believe the facts and he will be convinced that there isn’t a link.

So, Carson was using the approach of the scientist which is these are the facts, these are the figures, this is what we need to look at and that’s it. Donald Trump was using a story, right? He was using one incident and he was taking that incident as evidence that there is a link between the two, but he was telling that story with a lot of color and he was using a lot of emotion, and it had quite an effect. Anyone can look this up online and watch those few minutes of the debate. You really feel like you straight away have a reaction. When Donald Trump tells that story, you straight away feel like oh, no, and especially when I was watching it, my younger kid was only a few weeks old, and I felt this kind of emotional reaction to that story. And of course, I know that there isn’t a link and I’m a scientist and I’m a neuroscientist, but still even I felt this reaction of, well, maybe there is something in what he says, and that just demonstrates the power of emotion and the power of a story and how in the face of these things data and science simply do not have as much power at changing people’s minds.

Roger: And he used a very vivid image, too, the horse syringe which again, I think people can sort of imagine this ridiculously giant syringe which I’m sure is not what they would use for injecting infants but the story combined with the vivid imagery I think makes it more memorable and more convincing. I’ve used some similar stories when I’ve written and spoken about Trump later on in the election where on immigration Hillary Clinton had this detailed nine point program for how to fix the country’s, the US’s immigration policy and it was really kind of wonky with some acronym programs that were going to be extended or changed and your eyes would glaze over by about point number four, where Trump just said I’m going to build a wall, a big wall, and again, that communicates an image in a very emotional way that nobody’s image, I mean, if you check, ask ten people to draw a picture of the wall they were imagining you’d get ten different walls, but nevertheless it was really easy for people to imagine.

But I mean, it’s kind of dismaying I suppose that that sort of technique works. Does it drive you crazy as a scientist that all this data really sort of gets swept away by a simple emotional appeal? And it’s certainly not just Trump, it’s every day we’re doing that.

Tali Sharot: Yeah, it did to begin with and I think maybe one of the reasons that I started being interested in this whole topic was that when my previous book, The Optimism Bias, came out, I was giving a lot of talks to the public and explaining the science, and I found that whether someone believed me or did not believe me was based on what they personally experienced in their life. Did they think that they were optimists? Did they think that other people around them, people in their family were? So, did they have a story to tell? It was those things that determined whether they would believe the science and the science could be hundreds and hundreds of studies not only my own, but many other people’s and I could show all the data, but at the end of the day, what mattered is whether intuitively they felt that it relates to what they know from their own life. And so this did drive me a little bit crazy, but then I thought, well, we should actually accept this. I don’t think we could change it. We need science to figure out what’s true, so Ben Carson is right in saying we need the figures, we need the data to know what’s true.

But then just saying well, we’re just going to show the science. We’re going to show the data, and then people will just believe it because that’s what it is, I think that’s where we fail because we are denying by just doing that, we are denying science because the science tells us that it’s not enough. The science tells us that people will evaluate data and figures based on other things, and we need to take that into account. So we need to take what we know from science, from the data and we need to show that, but we need to show that with the frame that makes sense to be able to get this information to people.

Roger: Right, and there you hit early in the book on confirmation bias and how our brains tend to reject information that doesn’t agree with our preconceived notions and of course, we seek out information that does, and I think one big takeaway from the book is that basically studies show that studies don’t convince people and it’s kind of ironic I guess, but you need studies for the scientists but if you’re going to present arguments to people you need to do it in a different way. Logically, smart people should be less subject to confirmation bias errors, but is that really true?

Tali Sharot: Yes. A study showed that it’s exactly the opposite, and just backtracking one second, when you said we need studies to show that confirmation bias exists and we need studies to show that the studies are not enough, I think the point is to present the study or present the example where people can relate, can say yes, it’s right, right? I feel like that, too. And if you can find that specific study, that specific story and that specific example that’s helpful. So if we go back to your question about whether intelligence is related to confirmation bias, so no, there’s a study by Dan Kahan at Yale University that shows that actually people with better math and analytical skills are more likely to twist data at will, and this is a little bit concerning for scientists, but let me tell you what he did. So he took 1,000 Americans and the first thing he did is he gave them math tests and logic questions, and based on these tests he divided them into those with high skills and low skills. Then he gave everyone a set of data and he told them this data looks at whether skin treatment is helping with rashes, right?

So this is kind of like a neutral thing. No one has a strong private opinion about this skin treatment, and he said please analyze the data and tell me is it helping or not. So unsurprisingly those with better math skills did better at this job. They could figure it out by looking at the data. Then he gave them another set of data and he said this set of data is looking at whether gun control laws are reducing crime, and in this case the difference was that everyone had a very strong opinion about gun control laws. Some people were in support of the laws and some people were not and everyone was very, very, had a very strong opinion, and that actually interfered with people’s ability to analyze the data.

And it especially interfered with those with math and logic skills. They actually did worse at this task, so it seems that people were using their skills not necessarily to find the truth, but rather to find fault with the data that they weren’t happy with.

Roger: Probably gives them more skill, too, at finding those items that agree with their biases. I think that it’s like a headline writer for a newspaper, given a story, you could write ten different headlines that would create a totally different conclusion from the same story. So I guess that maybe this brings us to a good question that’s not really related to the book, Tali, but is there really a replication crisis in social science and what’s your take on it?

Tali Sharot: Well, on the replication crisis, I have very strong opinions on what the solution is. I mean, whether there’s a crisis or not is a little bit a matter of how you define it, right? Some people say well, people who are trying to replicate the specific study are not using the exact same paradigm, but then you would want to have the ability to generalize as well, and then some people say well, the solution is preregistration. So preregister what you’re about to do and then follow exactly what you’re about to do. My opinion is simply that before you publish a study and especially if it’s a behavioral study, so if it’s a study looking at behavioral data because those are relatively easy to collect, simply replicate it in your own lab, right? So, if every time you publish a study you simply replicate it yourself first and then publish those replications together with the original study, then we wouldn’t have such a problem, and I think that’s a solution. So, journals need to say we will only publish studies from labs that can replicate it in the same article.

And I think that’s the first step that needs to be done. Now I think it’s a relatively simple one. Now it becomes a little bit more difficult when you’re using methods that are more expensive like neuroimaging for example or you’re doing very long term studies or you’re doing pharmacological manipulations, and in those cases, it’s not impossible, it just becomes more expensive and more difficult. There’s still some things that you can do, some statistical tests to make sure that the effects are quite strong, but I think at least for the behavioral studies which is in fact what most people are concentrating on when they say replication studies, that’s the solution and that will really help in these kind of things not being an issue again.

Roger: Right, and I know that I’ve become more cautious. I base a lot of my stuff, I’m not a scientist myself, but a lot of my writing is based on research and I’ve become more cautious about taking one experiment and sort of running with that and focusing more on the science that’s been replicated in different ways and different places and different times which should mean it’s a lot more robust. So, even investment decisions can be affected by confirmation bias. Again, that seems like a very sort of logical, rational activity but how does that work?

Tali Sharot: Yeah, so we did one study where we invited people into our lab and we asked them to make financial decisions together, specifically they had to assess real estate and they actually had to put money on whether they think they’re right or wrong. So if they were quite confident that they could bet money on it, and what we found was we recorded their brain activity while they were doing that in two separate MRI scanners, and they could actually communicate with each other via computers while we were scanning their brains, and what we found was when two people agreed their brain activity showed precise encoding of the information coming from the agreeing partner, and everyone’s confidence in their decision was increased, right? Because they agreed. But when they disagreed that’s when the surprise came. When they disagreed metaphorically speaking it looked like the brain was shutting down and it wasn’t encoding, we couldn’t find encoding of the information coming from the disagreeing partner, and what happened to people’s confidence in their own decision? Not much. It didn’t actually go down much.

It kind of stayed the same. So, this shows you how when you learn of an opinion of another person that confirms your decision, that confirms your belief, you’re quick to take that in and it makes you more confident. But when you learn that someone else is saying well, I don’t think that’s right. My opinion is different. You are less likely to take that opinion into account, more likely to look at it in a more critical manner, and it’s less likely to actually affect both your confidence and your decision, and if we take this example, this specific study, if we go back to the replication crisis, so this is a study where we replicated this behavior quite a few times, so we did this in London and then we did it in Virginia and we kind of show that this behavior is, you can find it again and again and again, so that’s replication.

But on the other hand, the fMRI is so expensive that so far we’ve only done that once, but we do try, over the years, to replicate the fMRI study as well.

Roger: So, I think if we are trying to change somebody’s mind, the worst thing we can try and do is disprove their current beliefs and instead we should really try and focus on what they might be interested in or what they do believe and build on that, and I think that’s true in a marketing sense, even looking at a sales or persuasion situation. Would that be correct?

Tali Sharot: Yeah. So that’s kind of the point that I make in the book in the first chapter when I say well, we know, now that we know this, that we’re less likely to take in information coming from someone who disagrees with us, well that means really that if we want to change people’s behavior, if we want to change even their belief, we should start with common ground, a belief that we have in common, a motivation that we have in common. So for example, let’s go back to the problem that we started with which was the vaccines, right? Where Carson came out and said there’s the data, there isn’t actually a link. You can read the data. You can be convinced. So, parents who decide not to vaccinate their kids because of the alleged link, they are actually, they tend not to change their minds when health professionals come and say look at the data. It’s showing no link. So instead a group of scientists at UCLA said well, could we use a different approach, an approach that doesn’t actually concentrate and focus on what we disagree on, right? On the link to autism.

That’s what they disagree on. So instead of focusing on that, maybe we can focus on something that we agree on, and what they did, they said well, instead we’re going to highlight the benefits of the vaccines which are they are protecting kids from measles, mumps and rubella, all potentially deadly diseases. And by focusing on that instead, it was something that the parents, they didn’t disagree with this. They fully agreed that that was true, but it seemed to have been forgotten in the heated debate, and so they were focusing on a common belief and also on a common motive because both the doctors and the parents wanted the children to be healthy. And that had a much greater impact, so parents’ intention to vaccinate their kids increased three times as much as with the normal approach of just showing the data that they are wrong.

Roger: Now, actually that’s a good segue, Tali. I think another point that you make later in the book is that certain kinds of arguments are less effective, and imagery even. So if you were trying to, say, just get away from the data in that situation and encourage somebody to get their kid vaccinated, you might be tempted to show pictures of kids that were suffering from diseases that would be prevented by vaccination and sort of emphasize the negative consequences of not getting vaccinated, but I don’t think that’s really wise, is it?

Tali Sharot: Yeah, so there’s a study that was conducted at Stanford and what they wanted to do, they wanted to see what determines whether people will give to charity, and specifically to one of those websites like GoFundMe. I’m not sure that’s the exact website they used, but one of those, and so they wanted to see what will get people to fund and give out to charity more. And they found that the most important thing was whether the request elicited positive emotions, not negative emotions, so for example, if you wanted to get funding for someone who has a disease and needs help, a photo of that person in a healthy state smiling would get more donations than a photo of the person looking very ill and in pain in a hospital bed. They found that triggering positive emotions in the person who was about to contribute makes it more likely that they will give the money and help out rather than triggering sympathy for example or making that person feel bad via empathy.

So, they did that in a few ways. First of all, they had people just rate the different requests to say how positive does it make you feel, how negative does it make you feel, but they also actually used fMRI, so they also scanned people’s brains while they were looking at these different requests. So the requests included both images and a short description, and they found that in the sample of people who were in the MRI, they could use their data to predict how well these requests will do in a different sample online. So, how thousands of other people online will react to these requests, and what predicted that was the signal in the reward center in the brain, the nucleus accumbens. So when people had more of a reaction in the reward center, then that same request was more likely to get funded by thousands of other people that were encountering this request online. So this was done by Brian Knutson at Stanford University.

Roger: Yeah, I know Brian keeps coming up on this show. I’ve got to get him on here sometime. He’s really done some fascinating work over the years even on the pain of paying, and it seems like the, what I suppose you might call, and you’ll probably shudder when I say this but the donate button in the brain. That’s actually pretty similar to some of the work done at Temple University on when people make a purchase decision. So, I think that’s really fascinating stuff. So, explain about brain synchronization and brain coupling, and is there a way that we can take that knowledge to influence others?

Tali Sharot: Well, so this is work done by Uri Hasson at Princeton University and others as well, and it’s just one explanation for why emotion has an effect in influencing others, and there’s other explanations as well, so the basic idea is that anything that arouses some kind of an emotion makes you more likely to attend to it, right? So, when something emotional happens we tend to attend to that thing. We tend to remember it better, and that makes sense because an emotion is basically, it’s a signal in our brain saying this is important, right? And if something is important you want to recruit the rest of your brain to process that thing. So if you can manage to elicit arousal or emotion in other people, they’re more likely to listen, more likely to remember, but what the research on synchronization suggests is that not only are you getting people to attend but by putting them in a certain emotional state they’re more likely to process everything that you say in a similar manner to you.

Let me explain. So let’s say you are in a happy state, but I am in a sad state. Then whatever you’re saying I’m not going to process it similarly to you, right? Because I’m looking at it from a different point of view, but if you are able first to put me in a similar state as you, let’s say in a happy state, it’ll be easier for me to process whatever you say later in a similar way to you and the way, and that’s kind of reflected also in the activity that you can see in people’s brains. But I think the important thing about emotion is that emotion itself carries information, right? The reason that your emotion affects me so fast for example, if you’re stressed I’m more likely to be stressed if you’re next to me, right? If I’m happy, you’re likely to be happy, and the reason that we have this emotion contagion is because emotion carries an important signal.

So for example, if you see someone and that person is afraid, you will instantly become afraid, too, and that’s a good thing. Why? Because if they’re afraid, there might be something dangerous in the environment, and if you then feel afraid too you’re more likely to look and try and figure out if there is something dangerous, to try to look around or if for example, someone next to you is excited, that conveys to you that maybe there’s a reward in your environment. So if they’re excited, you’re more likely to be excited and then you’re more likely to scan your surroundings for reward.

So emotions are really at the basic. They are signals. They convey information about what’s happening around us, and that’s really why it’s adaptive for us to have this emotional contagion.

Roger: Well, Tali, I know you give speeches. Have you been able to apply this at all when you are planning what to say or how to deliver it?

Tali Sharot: Yeah, I think it’s well-known that any kind of speech that can elicit any kind of emotion, people will listen more, they will be engaged more, they will get the message better, and I, my preferred emotion is humor or surprise. That’s more my style, but you can see other people eliciting well, I guess maybe even sadness or other, I mean, that you could think of politicians that try to elicit fear, right? So, it’s emotions are definitely important because if a speech or even a talk like a dry scientific talk, if it doesn’t create some kind of surprise or some kind of hope in the people who are listening, they are less likely to take in what the person is saying.

Roger: Yeah, you cite Kennedy’s speech about the moon as an example of a speech that moved the world more or moved the country at least, and was extremely effective.

Tali Sharot: Yeah, I think, I mean, one of the interesting things to me about thinking about emotions and positive and negative is actually the association that I talk about in chapter three about the relationship between rewards and punishments and how they affect motivation in different ways. So, one thing that neuroscientific research has shown is that when people expect a reward, let’s say you expect to get money or you expect to get positive feedback, you’re more likely to act, and when you expect punishment, like you expect to lose money, people are actually more likely to not act, to stay still, and the reason for that is that usually in life to get the good stuff whether it’s a chocolate cupcake or promotion or love we need to act. We need to move forward, and so our brain has adapted to this environment where the reward system is connected to our motor system, and when we expect something good, when we expect a reward, we act faster.

But on the other hand, to avoid the bad stuff in life, not all but a lot of the time, we actually need to stay still. So if you want to avoid poison or deep waters or untrustworthy people usually all you need to do is just not get close, just to stay where you are and not do anything, not take the risk, not act. So our brain has adapted to that environment and when we expect something bad, there’s what’s called a no-go signal in our brain and it inhibits action. And so what that means really is that if you want to motivate people to do something, you want to motivate them to produce a star report, you want to motivate them to go out and vote, trying to convey what the reward would be is better than trying to threaten them with warning. But if you want someone not to do something, if you want them not to share privileged information, if you want them not to use the company resources for their own personal benefits, the threat of a punishment can actually be more effective.

I think that’s just like an interesting example of how neuroscience was very helpful in highlighting what one can do to motivate people.

Roger: You cover a lot of territory in the book, Tali. One section that was interesting was the curiosity part. We just had Mario Livio on the show, who wrote an entire book on curiosity, and you devote a chapter to why we’re programmed to seek out new information, but it isn’t necessarily a very good program because we aren’t always looking for information that is really important to us. We’ll ignore safety instructions on an airplane that could be quite vital but click on a link that promises to show us celebrity wardrobe malfunctions or something. What drives our attraction to some information and not others?

Tali Sharot: So, in general we are curious creatures. We like to find out new stuff. We like to increase our knowledge and this is why the internet is so successful, why social media is so successful because there’s information coming in all the time, and there’s really nice studies by Ethan Bromberg-Martin at Columbia and he shows that monkeys are the same, so monkeys actually would like to know information in advance. For example, they want to know am I going to get a large reward or a small reward. Are they going to get a lot of water or just a little bit? And they want to know so badly that they’re willing to pay for it, so you can train them and figure this out, and what he found is that the same neurons in the brain and the same rules that underlie how we process real rewards like food and water, the same system signals also the opportunity to gain information. So it’s as if the brain is treating information as if it was a reward in and of itself.

And so it’s driving us to get more and more information in the same way that it’s driving us to get other rewards like sex and food. However, there is one caveat to this which we have looked at recently, and that is that not all information is treated like a reward in the brain. Some information is actually treated like an aversive outcome, like shocks, and that is information that we suspect is going to bring bad news. So, when we suspect we might find something bad behind the door, we sometimes just want to keep the door shut and not have a look, and you can see that in life, right? People will avoid medical screenings, even though conditions can be treatable, even though it could save their lives, but we sometimes avoid going to the doctor because we just don’t want to know.

There are many other things that we try to avoid, bad reviews of our work. We just don’t want to know a lot of the times. And this is, it doesn’t mean that we never go to medical screenings. We do, but less so than when we expect good news, right? If we expect the doctor to tell us oh, you’re absolutely healthy, then we kind of run to the doctor.

Roger: I suppose that explains why if I hear that the stock market went way up today, I’m much more likely to check my portfolio than if I heard there was a big loss on the stock market today. I just avoid the bad news.

Tali Sharot: Right. So that’s a great study by three behavioral economists, Karlsson, Loewenstein and Seppi. They show that when the market is up people believe that their stocks went up so they’re more likely to check on their accounts without any intention of making a transaction, just to have a little kind of sniff of the good news, yeah? And when the market goes down, they’re less likely to look at their accounts because they think well, my value probably went down and I just don’t want to know. And so they show this and there is one exception. The exception is when things are so bad that negative news can’t really be avoided, so for example when the market collapsed, that’s when people log in frantically, right? Just with the hope that maybe they have survived and it’s not that bad. But yeah. That’s a really interesting study.

Roger: Right, and perhaps under those conditions they might want to take some action, where a daily increase or decrease might not really affect your decision making that much. But if the market is really cratering then you might, which, of course, is the wrong time to do something, to sell your stocks when everybody else is trying to sell their stocks, but I think history shows that’s exactly what happens. So, Tali, to put you on the spot here, who else in the influence science area do you think is doing good work today or really good work today, like who else should our listeners be checking out?

Tali Sharot: The authors of Nudge, Cass Sunstein and Richard Thaler. So Cass Sunstein is a close collaborator and he does fabulous work, and I’m going to give you a few names that are not, I don’t see myself as studying influence. The word influence is in my book and it talks about it, but what my research is really trying to do is figure out how people form beliefs and how they make decisions, and I was saying well, if we know all of this, we could probably use this to help create positive change, right? So that’s the idea, so I think in many cases, the best insights will come from people on whose website you won’t find the word influence and none of whose research will have the word influence, but you will gain great insight from people who can give you knowledge of how people make decisions, of how people interact with other people and things like that.

So Michael Milligan at Harvard Business School is another person who’s doing great work, and George Loewenstein.

Roger: At Carnegie Mellon.

Tali Sharot: Yeah. Another great behavioral economist. Of course, Dan. We all know Dan.

Roger: Right. Well, I’ve got to get Loewenstein on the show, too. I’ve spoken to him but haven’t had him on the show, so anyway, I’ll be respectful of your time here, Tali. I’ll remind our audience that we are speaking with Tali Sharot, neuroscientist at University College London and MIT and author of the new book The Influential Mind: What the Brain Reveals About Our Power to Change Others. Tali, how can listeners find you and your content online?

Tali Sharot: So my lab is affectivebrain.com and my book is The Influential Mind. You can find it on Amazon.

Roger: Okay, great. Well, we will link to both those places and any other resources we talked about during the show on the show notes page at rogerdooley.com/podcast and as usual we’ll have a full text version of our conversation there as well. Tali, thanks for being on the show.

Tali Sharot: Okay. Thank you so much for having me.

Thank you for joining me for this episode of the Brainfluence Podcast. To continue the discussion and to find your own path to brainy success, please visit us at RogerDooley.com.